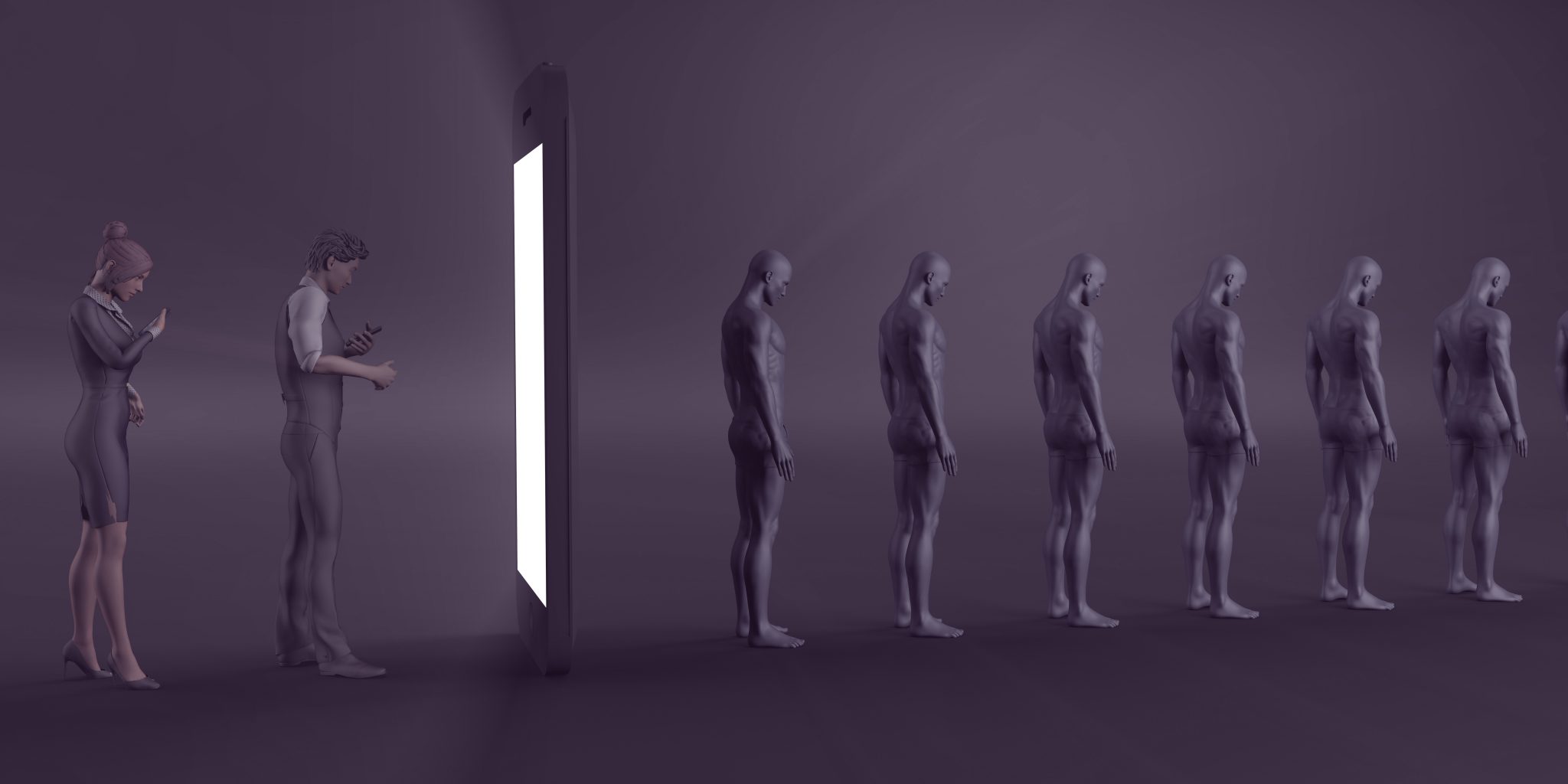

Former employees of major tech companies have openly admitted that they were employed to use manipulative and addictive tactics to keep users coming back to their apps and to increase screen time.

The practice of manipulating users, similar to how casinos do their best to keep you gambling, is something you may not be fully aware of but are certainly impacted by.

This is why Senator Josh Hawley of Missouri proposed the Social Media Addiction Reduction Technology Act (SMART Act). The goal of the new bill is to regulate and limit the intentionally addictive design and construction used in social media apps.

For example, infinite scroll was developed to keep users from ever knowing where a good stopping place is. Have you ever been at a wedding or restaurant where the waiter refills your wine glass before it's finished? You drink a few sips, and a minute later it is full again. If you have experienced this, you will likely remember that, one, you had no idea how much wine you drank and, two, you likely drank more than you otherwise would have. This is the power of infinite scroll: a constantly refilling glass of social content that prevents you from knowing how much is enough.

Another example is swiping down to reload a page, which triggers a slot machine-like response. You get a nice sound, sometimes an animation, and fresh, new content. These actions that you may not think twice about are the result of thousands of hours of testing by engineers, all aimed at one question: what can they do to keep you engaged and addicted to their app?

A final example is the Snapstreak on Snapchat. Snapchat users have become unhealthily obsessed with Snapstreaks. The idea that your friendship and worth are tied to this number is something Snapchat purposely implemented. The company is, in essence, tying your friendship's value to how often the two of you use the app.

To curb practices like these, the bill would require a whole host of changes from social media companies. Key changes include clear opt-in and opt-out options and a default limit of 30 minutes per day on each app, with a warning pop-up.

How Social Media Is Manipulating You

A recent study found that the most engaging content online is content that elicits moral outrage. If you look at the most recommended videos on YouTube and those with the highest engagement, the top keywords are "hates," "debunks," "destroys," "obliterates," and so on. These keywords elicit moral outrage, which is like crack for user engagement.

The federal government works to regulate and limit the use of addictive practices in cigarettes, alcohol, and gambling. There have been lengthy lawsuits over the strategies these industries use to gain new customers. Big tech should be no different.

If you think the ability of these tech companies to manipulate people has peaked, you have only to look at AI and what companies like Google and Facebook are implementing.

Artificial intelligence can now detect your mood and determine whether you are depressed, anxious, excited, and so on. While this could be used for good, such as in suicide prevention, the likelihood is far greater that it will be used to continue to manipulate and sell.

Picture Amazon’s Alexa in your house, listening to every word you say. Alexa picks up on your mood and infers that it is because you recently went through a divorce. Suddenly, you start to get ads and suggestions everywhere online for dating sites, a new gym membership, and the like. This is the next level of advertising, targeted at your life’s situational moments.

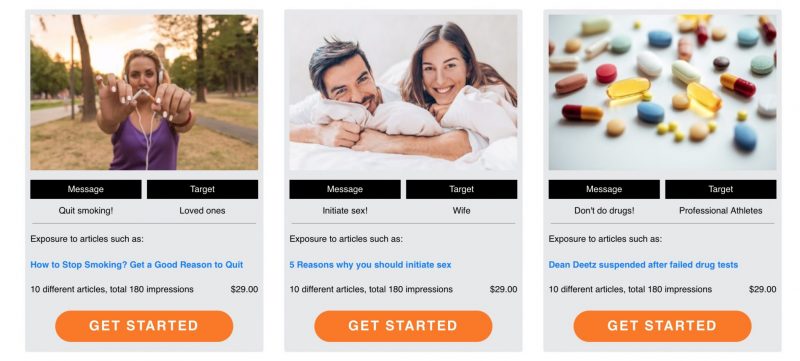

Think this type of marketing doesn’t work? You can test it out yourself. A company called The Spinner provides a $29 service that shows targeted ads to anyone you want. Do you want your wife to let you get a dog, to have sex with you more, or to go to the gym more? There’s a package of advertising for that. Simply choose the package and pay $29, and your wife will be repeatedly shown ads for whatever you want to subconsciously urge her to do.

AI Used For Good – Detecting Depression In Teenagers & Kids

While the examples above certainly represent instances where AI can be used negatively to exploit someone, it also has incredible potential to do good.

A study out of the University of Vermont found that AI can detect depression in a child’s voice. Children are often hesitant to share that they are feeling depressed or anxious, and younger children don’t yet have the ability to fully express their emotions in words. This can make it hard to decipher a young child's mental state.

The study asked children to tell a story and told them they would be judged on how interesting it was. Throughout the story, AI measured the child's voice while an onlooker gave only neutral or negative expressions. Twice during the story, a buzzer would sound and the onlooker would tell the child how much time they had left. If this sounds stressful, that is the point. This test, the Trier-Social Stress Task, is meant to elicit anxiety and stress.

In this study, the AI used machine learning to detect signs of stress, anxiety, and depression in children’s voices. The potential of AI for this use case and many others is tremendous. AI is also on the cutting edge of medical diagnosis, such as early cancer detection.

With every great leap in technology, and artificial intelligence certainly is one, comes the potential for misuse. We will have to wait and see if and how regulators will limit the ways tech companies use AI, whether for their own benefit or for the benefit of their customers. At first glance, the vast majority of the current electorate does not understand these cutting-edge advancements well enough for them to be properly regulated.